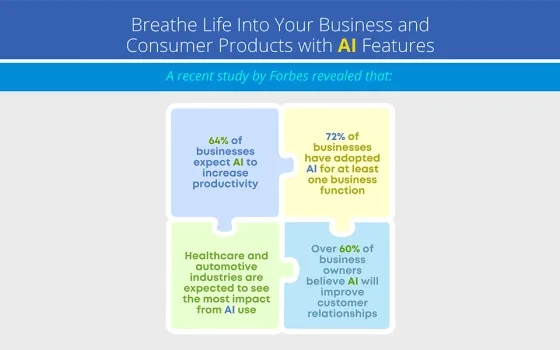

In a world where 78% of organizations now use AI in at least one business function—up from 55% just a year ago—the question isn't whether your enterprise should adopt AI, but how quickly you can implement it effectively.

Picture this: Your development team needs to integrate natural language processing into your application. Five years ago, this would have meant months of research, data collection, model training, and countless iterations. Today? You can have a production-ready solution running in hours, not months. Welcome to the era of AI model libraries—the democratizing force that's putting enterprise-grade artificial intelligence at every developer's fingertips.

The AI Revolution: By the Numbers

The numbers paint a compelling picture. According to Stanford's 2025 AI Index Report, nearly 90% of notable AI models in 2024 came from industry, up from 60% in 2023. This industrial focus has created an ecosystem where pre-trained models are not just available; they are becoming the standard approach to AI implementation.

Consider Hugging Face, the GitHub of AI models, which now hosts over 1.7 million models, 400,000 datasets, and 600,000 demo applications. With 28.81 million monthly visits and users spending an average of 10 minutes and 39 seconds per session, it's clear that the developer community has embraced the model library approach.

What Exactly is an AI Model Library?

An AI model library is essentially a curated repository of pre-trained machine learning models that developers can integrate into their applications without building from scratch. Think of it as a comprehensive toolkit where each tool (model) has been crafted, tested, and optimized for specific tasks.

These libraries serve multiple purposes:

Pre-trained Models: Models that have already been trained on massive datasets and can be used immediately or fine-tuned for specific use cases.

Transfer Learning Hub: A starting point where you can take a model trained on one task and adapt it to your specific needs with minimal additional training.

Version Control for AI: Like Git for code, these libraries provide versioning, documentation, and collaborative features specifically designed for machine learning models.

Standardized APIs: Consistent interfaces that make it easy to swap models or experiment with different approaches without rewriting your entire application.
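
The "standardized API" idea can be sketched in a few lines. This is a minimal illustration, not the interface of any real library: `TextClassifier`, `KeywordModel`, `LengthModel`, and `route_ticket` are hypothetical names, and the two toy classes stand in for pre-trained models.

```python
from typing import Protocol


class TextClassifier(Protocol):
    """Hypothetical shared interface; any object with this method qualifies."""

    def predict(self, text: str) -> str: ...


class KeywordModel:
    """Toy stand-in for a pre-trained model: flags texts containing 'refund'."""

    def predict(self, text: str) -> str:
        return "billing" if "refund" in text.lower() else "general"


class LengthModel:
    """Second toy stand-in with different internals but the same interface."""

    def predict(self, text: str) -> str:
        return "billing" if len(text) > 40 else "general"


def route_ticket(model: TextClassifier, text: str) -> str:
    """Application code depends only on the interface, not on a concrete model."""
    return f"queue:{model.predict(text)}"


# Swapping models requires no change to route_ticket.
print(route_ticket(KeywordModel(), "Please refund my order"))
print(route_ticket(LengthModel(), "Please refund my order"))
```

Because `route_ticket` only relies on `predict`, experimenting with a different model is a one-argument change rather than an application rewrite.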

The Technical Architecture

At their core, AI model libraries operate on several key principles:

Standardization: Models are packaged using consistent formats like ONNX (Open Neural Network Exchange), TensorFlow SavedModel, or PyTorch's TorchScript. This standardization ensures interoperability across different frameworks and deployment environments.

Versioning and Governance: Enterprise-grade libraries typically apply a versioning scheme such as semantic versioning, so teams can pin a known release and adopt updates without breaking existing integrations. On the Hugging Face Hub, for instance, each model lives in a Git-based repository, so a deployment can pin a specific branch, tag, or commit.

Optimization Pipelines: Many libraries include pre-optimized model variants. NVIDIA has advertised TensorRT-optimized inference as up to 40x faster than CPU-only platforms. Actual speedups, and any accuracy loss from reduced precision, depend on the model, hardware, and precision mode, so they should be measured per workload.

Metadata and Provenance: Professional libraries include detailed metadata about training data, performance metrics, licensing, and ethical considerations—critical for enterprise compliance and risk management.
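
To make the versioning principle concrete, here is a minimal sketch of a compatibility check under semantic versioning, where a major-version bump signals a breaking change. The helper names `parse_version` and `is_compatible` are hypothetical, and real registries may use Git revisions or their own scheme instead.

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, int, int]:
    """Parse 'MAJOR.MINOR.PATCH' into a tuple of integers."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def is_compatible(pinned: str, candidate: str) -> bool:
    """Accept a candidate with the same major version that is not older than the pin."""
    pinned_v, candidate_v = parse_version(pinned), parse_version(candidate)
    return candidate_v[0] == pinned_v[0] and candidate_v >= pinned_v


print(is_compatible("2.1.0", "2.3.4"))  # same major, newer release
print(is_compatible("2.1.0", "3.0.0"))  # major bump, treated as breaking
```

A deployment pipeline could run a check like this before pulling a newer model, and require manual review whenever it returns `False`.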

The Landscape of AI Model Libraries

Open-Source Ecosystem Leaders

Hugging Face Hub

The undisputed leader in the space, Hugging Face has become synonymous with accessible AI. Its Transformers library has democratized natural language processing, while its Hub serves as the central repository for the community.

Key Statistics:

- Over 1.7 million models available

- 400,000+ datasets

- Support for 10+ machine learning libraries

- Models downloaded billions of times monthly

TensorFlow Hub

Google's official model repository focuses heavily on TensorFlow models, offering everything from image classification to text embeddings.

PyTorch Hub

PyTorch Hub, from the PyTorch project that originated at Meta, lets developers discover and load models directly in Python code.

Model Zoo Collections

Various specialized repositories like OpenMMLab, NVIDIA NGC, and others focus on specific domains like computer vision or conversational AI.

Enterprise and Cloud Platforms

AWS SageMaker JumpStart provides over 600 pre-trained models with one-click deployment capabilities. Amazon reports that customers using JumpStart reduce time-to-market by an average of 60% compared to building models from scratch.

Google Cloud Model Garden integrates with Vertex AI, offering enterprise-grade models with built-in MLOps capabilities, monitoring, and governance frameworks.

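Azure Machine Learning Model Catalog targets enterprise compliance needs. Models are deployed within Azure, which maintains attestations such as SOC 2 and supports HIPAA and GDPR obligations. Whether a specific deployment is compliant still depends on how the model and its data are used.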

The Technical Architecture Behind Model Libraries

Modern AI model libraries are built on several key technical foundations:

Model Serialization and Format Standards

Most libraries use standardized formats like ONNX (Open Neural Network Exchange), PyTorch's .pth files, or TensorFlow's SavedModel format. These formats ensure models can be loaded and executed across different frameworks and platforms.

Metadata and Model Cards

Each model comes with comprehensive metadata including:

- Performance metrics on benchmark datasets

- Training data information and potential biases

- Recommended use cases and limitations

- Hardware requirements and inference specifications

- Licensing information
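
A model card can be treated as structured data and checked before a model is approved for use. The sketch below is illustrative only: `ModelCard` and `missing_fields` are hypothetical names that mirror the fields listed above, not the schema of any particular library.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ModelCard:
    """Hypothetical metadata record mirroring the fields listed above."""
    name: str
    license: str
    benchmark_metrics: Dict[str, float] = field(default_factory=dict)
    training_data: str = ""
    known_limitations: List[str] = field(default_factory=list)
    min_memory_gb: float = 0.0


def missing_fields(card: ModelCard) -> List[str]:
    """Return the names of fields a reviewer would still need filled in."""
    problems = []
    if not card.license:
        problems.append("license")
    if not card.benchmark_metrics:
        problems.append("benchmark_metrics")
    if not card.training_data:
        problems.append("training_data")
    if not card.known_limitations:
        problems.append("known_limitations")
    if card.min_memory_gb <= 0:
        problems.append("min_memory_gb")
    return problems


# Placeholder values for illustration only.
card = ModelCard(
    name="sentiment-demo",
    license="apache-2.0",
    benchmark_metrics={"accuracy": 0.91},
)
print(missing_fields(card))
```

A check like this could gate model intake, so that a model with no documented training data or limitations never reaches production review.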

Container and Cloud Integration

Many libraries now provide Docker containers and cloud deployment templates, which can reduce deployment to AWS, Google Cloud, or Azure to a few commands once credentials and infrastructure are in place.

The Business Impact: ROI of Ready-to-Use Models

Development Time Reduction

Salesforce's survey reveals that 67% of IT leaders have prioritized generative AI for their business within the next 18 months, largely due to the rapid implementation capabilities of pre-trained models. Organizations report reducing AI project timelines from 12-18 months to 2-4 months when using model libraries.

Cost Efficiency

Training large language models from scratch can cost millions of dollars. GPT-3's training alone was estimated at $4.6 million in compute costs. By using pre-trained models, enterprises can achieve 80-95% of custom model performance at less than 5% of the development cost.

Talent Accessibility

McKinsey reports that 13% of surveyed organizations have adopted generative AI in their software engineering function. Pre-trained models lower the barrier further, letting companies implement AI solutions without PhD-level expertise in machine learning.

Implementation Strategies for Enterprise Adoption

Architectural Patterns

Model-as-a-Service (MaaS): Deploy models behind API gateways for centralized management. This pattern enables A/B testing, gradual rollouts, and consistent monitoring across applications.

Embedded Inference: For latency-critical applications, embed optimized models directly in the application. On-device runtimes such as TensorFlow Lite can reach sub-10 ms inference times for small models on suitable hardware.

Hybrid Approaches: Combine multiple models for complex workflows. For example, a document processing pipeline might use separate models for OCR, classification, and entity extraction, all sourced from different libraries.
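
The gradual rollouts mentioned under Model-as-a-Service can be sketched as deterministic traffic splitting at the gateway. This is one possible approach under stated assumptions, not a prescribed design: `choose_variant` and the variant labels `"candidate"` and `"stable"` are hypothetical.

```python
import hashlib


def choose_variant(user_id: str, rollout_percent: int) -> str:
    """Deterministically assign a user to 'candidate' or 'stable'.

    Hashing the user ID keeps each user on the same variant across requests.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return "candidate" if bucket < rollout_percent else "stable"


# Send roughly 10% of users to the new model version.
for uid in ["user-1", "user-2", "user-3"]:
    print(uid, choose_variant(uid, rollout_percent=10))
```

Raising `rollout_percent` step by step widens exposure to the new model, and setting it to 0 acts as an immediate rollback.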

Security and Governance Framework

Enterprise implementations require robust security measures:

Model Provenance Tracking: Implement systems to track model origins, training data sources, and modification history. This becomes critical for audit trails and compliance requirements.

Access Control: Deploy role-based access controls for model deployment and updates. Many organizations implement approval workflows for production model changes.

Data Privacy: Ensure models comply with data residency requirements. European enterprises often require models trained without personal data or with appropriate anonymization techniques.
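
Provenance tracking can start with something as simple as recording a cryptographic digest of each model artifact. The following is a minimal sketch; `provenance_record`, `verify_artifact`, and all field values are hypothetical placeholders rather than part of any specific governance tool.

```python
import hashlib
import json
from datetime import datetime, timezone


def provenance_record(artifact: bytes, source: str, training_data: str) -> dict:
    """Build an audit entry keyed by the SHA-256 digest of the model artifact."""
    return {
        "sha256": hashlib.sha256(artifact).hexdigest(),
        "source": source,
        "training_data": training_data,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


def verify_artifact(artifact: bytes, record: dict) -> bool:
    """Check that the bytes about to be deployed match the recorded digest."""
    return hashlib.sha256(artifact).hexdigest() == record["sha256"]


# Placeholder bytes stand in for a real weights file.
weights = b"example-model-weights"
record = provenance_record(
    weights,
    source="internal-registry/sentiment-demo",
    training_data="public reviews corpus (placeholder)",
)
print(json.dumps(record, indent=2))
print(verify_artifact(weights, record))
```

Storing such records in an append-only log gives auditors a way to confirm that the model in production is the one that was reviewed.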

Future Trends and Emerging Technologies

Multimodal Model Integration

The next generation of model libraries focuses on multimodal capabilities. Models like CLIP (Contrastive Language-Image Pre-training) enable unified text and image understanding, opening new application possibilities in content management and search.

Edge-Optimized Models

As edge computing grows, libraries increasingly provide models optimized for resource-constrained environments. Frameworks such as Apple's Core ML and Google's MediaPipe run models efficiently on mobile devices, often with accuracy close to that of their cloud-hosted counterparts.

Automated Model Selection

Emerging platforms use AutoML techniques to automatically select optimal models from libraries based on specific requirements. Google's AutoML Tables, now part of Vertex AI, can evaluate dozens of model architectures and select the best performer for tabular data problems.
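
At its simplest, automated selection means scoring each candidate on held-out data and keeping the best one. The sketch below shows only that core loop with toy threshold functions; it is not how any commercial AutoML product works internally, and `accuracy`, `select_best`, and the candidate names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[float, int]     # (feature value, label)
Model = Callable[[float], int]  # maps a feature value to a predicted label


def accuracy(model: Model, data: List[Example]) -> float:
    """Fraction of examples the model labels correctly."""
    correct = sum(1 for x, y in data if model(x) == y)
    return correct / len(data)


def select_best(candidates: Dict[str, Model], validation: List[Example]) -> Tuple[str, float]:
    """Score every candidate on held-out data and return the best name and score."""
    scores = {name: accuracy(model, validation) for name, model in candidates.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# Toy candidates standing in for models pulled from a library.
candidates = {
    "threshold_0.3": lambda x: int(x > 0.3),
    "threshold_0.5": lambda x: int(x > 0.5),
    "always_one": lambda x: 1,
}
validation = [(0.1, 0), (0.4, 0), (0.6, 1), (0.9, 1), (0.2, 0), (0.7, 1)]

print(select_best(candidates, validation))
```

In practice the scoring function would also account for constraints such as latency, memory, and licensing, not accuracy alone.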

Federated Learning Integration

Future model libraries will likely incorporate federated learning capabilities, enabling organizations to benefit from collaborative training while maintaining data privacy. This approach could revolutionize model development in regulated industries.
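
The aggregation step at the heart of federated learning can be sketched as a weighted average of client model weights, in the style of federated averaging. This is a simplified illustration that assumes each client sends a flat list of weights plus its local example count; `federated_average` is a hypothetical helper, and real systems add secure aggregation, client sampling, and multiple training rounds.

```python
from typing import List, Tuple


def federated_average(updates: List[Tuple[List[float], int]]) -> List[float]:
    """Combine client weight vectors, weighting each by its number of local examples.

    Each update is (weights, num_examples); only weights leave the client, not raw data.
    """
    total_examples = sum(n for _, n in updates)
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_examples
    return averaged


# Two hypothetical clients: one with 100 local examples, one with 300.
updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
print(federated_average(updates))
```

The client with more data pulls the shared model further toward its own weights, while neither client's raw records are ever transmitted.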

Best Practices and Implementation Guidelines

Development Workflow Integration

Continuous Integration: Integrate model updates into CI/CD pipelines using tools like MLflow or Kubeflow. Automated testing should validate model performance on representative datasets before production deployment.

Version Control: Treat models as code artifacts with proper versioning. Git LFS or specialized tools like DVC (Data Version Control) enable effective model version management.

Monitoring and Alerting: Implement comprehensive monitoring for model drift, performance degradation, and resource utilization. Prometheus and Grafana provide effective monitoring stacks for ML workloads.
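
Drift monitoring can be illustrated with the Population Stability Index (PSI), one common way to compare a live feature distribution against a reference sample. The sketch below is an assumption-laden example rather than a recommended monitoring stack: `histogram` and `psi` are hypothetical helpers, the bin edges are arbitrary, and values outside the edges are not counted in any bin.

```python
import math
from typing import List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin defined by consecutive edges (last bin is closed)."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                counts[i] += 1
                break
    return [c / len(values) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], edges: Sequence[float]) -> float:
    """Population Stability Index between a reference sample and a live sample."""
    eps = 1e-6  # avoids log(0) when a bin is empty
    e = histogram(expected, edges)
    a = histogram(actual, edges)
    return sum((ai - ei) * math.log((ai + eps) / (ei + eps)) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
reference = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
live_shifted = [0.8, 0.85, 0.9, 0.95, 0.6, 0.7, 0.9, 0.99]

print(psi(reference, reference, edges))     # identical distributions
print(psi(reference, live_shifted, edges))  # live data concentrated in upper bins
```

A commonly quoted rule of thumb treats PSI values above roughly 0.2 as worth investigating, though the right threshold depends on the feature and the cost of a false alarm. A value like this could be exported as a metric and alerted on through whatever monitoring stack is already in place.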

Team Structure and Skills

Cross-Functional Teams: Successful implementations require collaboration between data scientists, ML engineers, and software developers. Organizations report 50% higher success rates with dedicated MLOps teams.

Training and Upskilling: Invest in team education on model library ecosystems. Companies like Netflix dedicate engineering time to learning new AI/ML technologies and tools.

Documentation and Knowledge Sharing: Maintain comprehensive documentation of model selection rationale, performance characteristics, and deployment configurations. This becomes critical as teams scale.

Conclusion: Embracing the AI Model Library Revolution

AI model libraries represent more than just a development convenience—they're a strategic enabler of digital transformation. Organizations that effectively leverage these resources can accelerate innovation, reduce costs, and compete more effectively in AI-driven markets.

The key to success lies not in simply adopting these libraries, but in building the organizational capabilities to evaluate, integrate, and manage them effectively. This includes developing robust governance frameworks, establishing performance monitoring systems, and fostering cross-functional collaboration between technical and business teams.

As the AI landscape continues to evolve at an unprecedented pace, model libraries will become increasingly sophisticated, offering more specialized capabilities, better optimization, and seamless integration with enterprise systems. The question isn't whether to adopt AI model libraries—it's how quickly your organization can build the expertise to leverage them effectively.

The companies that master this capability today will be the ones that define the competitive landscape tomorrow. The tools are ready, the models are trained, and the infrastructure is in place. The only remaining question is: what will you build?

References

- Stanford HAI. (2025). The 2025 AI Index Report. https://hai.stanford.edu/ai-index/2025-ai-index-report

- McKinsey & Company. (2025). The state of AI: How organizations are rewiring to capture value. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- Hugging Face. (2024). Hugging Face Hub documentation. https://huggingface.co/docs/hub/en/index

- Salesforce. (2025). Generative AI Statistics for 2024. https://www.salesforce.com/news/stories/generative-ai-statistics/

- Founders Forum Group. (2025). AI Statistics 2024–2025: Global Trends, Market Growth & Adoption Data. https://ff.co/ai-statistics-trends-global-market/

- Orca Security. (2024). 10 Most Popular AI Models of 2024. https://orca.security/resources/blog/top-10-most-popular-ai-models-2024/